Introduction & Main Themes

I attended the AI Developer Conference in San Francisco from April 28 to 29, and I wanted to capture my notes here. The main theme of the two-day event was software development in the new GenAI age, specifically focusing on coding agents and the future of software engineering.

I observed a few main themes throughout the event:

- The evolution of coding agents and the software development lifecycle.

- Memory, context engineering, and harness engineering, which were discussed multiple times with slightly different definitions.

- The “observability flywheel” concept championed by LangChain founder Harrison Chase. He described it as four phases (build, test, deploy, and monitor) with the “trace” at the center, used to observe each phase and improve the next turn of the flywheel.

- Human skill development: helping developers adapt to this fast-changing era. This fits the organizer, DeepLearning.AI, which is an education platform.

- An observer’s view of the AI startup and industry landscape based on the sponsors and speakers present.
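To make the trace-centered flywheel a bit more concrete, here is a minimal Python sketch. It assumes that a trace is simply the list of spans (LLM calls, tool calls) recorded for one run, and that the monitor phase feeds failing production traces back into the test phase as regression cases. The `Span`, `Trace`, `monitor`, and `grow_test_set` names are hypothetical and are not LangChain or LangSmith APIs.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Span:
    name: str            # hypothetical span kinds, e.g. "llm_call" or "tool:bash"
    input: str
    output: str
    error: bool = False


@dataclass
class Trace:
    run_id: str
    spans: list[Span] = field(default_factory=list)

    def failed(self) -> bool:
        # A run counts as failed if any of its spans errored.
        return any(s.error for s in self.spans)


def monitor(traces: list[Trace]) -> list[Trace]:
    """Monitor phase: pick out production traces that contain a failing span."""
    return [t for t in traces if t.failed()]


def grow_test_set(test_set: list[Trace], failing: list[Trace]) -> list[Trace]:
    """Close the loop: add new failures to the test set, deduplicated by run_id."""
    seen = {case.run_id for case in test_set}
    return test_set + [t for t in failing if t.run_id not in seen]


# Usage: one healthy run and one run whose bash tool call failed.
prod = [
    Trace("r1", [Span("llm_call", "question", "answer")]),
    Trace("r2", [Span("tool:bash", "ls", "", error=True)]),
]
tests = grow_test_set([], monitor(prod))
print([t.run_id for t in tests])  # ['r2']
```

The point of the design is that the same trace object serves every phase: it is produced at deploy time, inspected at monitor time, and reused as a test case at test time.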

The Shift in Software Engineering

Many discussions focused on how coding agents are managing the entire software lifecycle. For example, CodeRabbit showcased their AI code review platform. The recurring challenge is that coding agents are inherently stateless: every session is like onboarding a new employee. The consensus is that reasoning is no longer the hardest part; the real challenge is context engineering, that is, giving the agent enough good context to do its job.

Andrew Ng’s keynote expanded on this shift. He explained that software is now built using two different types of building blocks: AI blocks (like LLMs and agent workflows) and non-AI blocks (like traditional UIs and databases). Mastering both is the new core competency.

He also advocated aiming for 100% AI coding. The rationale is that even if AI writes 80% of your code, manually writing the remaining 20% might take significantly longer than the AI-generated 80%. Identifying the bottleneck in that final 20% (perhaps a lack of context) is crucial. Because AI has made writing code so fast, bottlenecks are shifting to other parts of the lifecycle, such as product management, legal, design, and marketing. Small, AI-native teams in which everyone has cross-functional skills can clear these hurdles much faster than traditional corporate teams.

Ng outlined the evolving job description for an AI engineer:

- Becoming an “agent manager” who orchestrates workflows.

- Mastering the integration of AI and non-AI building blocks.

- Developing strong product management and product sense to handle the new non-coding bottlenecks.

While many predict a “job apocalypse,” Ng is highly optimistic and focuses on augmentation and new job creation. Personally, I think the truth is somewhere in the middle: we aren’t facing total replacement, but we are seeing short-term job eliminations. Ng also announced two products targeting “parallel skill development”: Context Hub, an open-source library that lets agents fetch fresh context such as the latest API docs, and Codream, a human-focused learning tool that combines executable JavaScript with video.

Harness Engineering vs. Context Engineering

There was an interesting debate around mental models for agents. Harrison Chase described the “harness” as the features the model cannot deliver on its own. He outlined six desired agent capabilities:

- Working durably with real data (requiring file systems and Git).

- Writing and executing code (requiring bash).

- Safe execution and default testing (requiring a sandbox environment).

- Remembering and accessing new knowledge (requiring memory, web search, or MCPs).

- Maintaining performance over a long context (requiring compaction).

- Completing long-horizon work (requiring a ReAct loop, planning, and verification).
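These capabilities map onto a surprisingly small amount of harness code. The sketch below is a hypothetical illustration, not the Deep Agents API: a ReAct-style loop that calls tools (standing in for bash or a file system), compacts the history once it exceeds a message limit, and only accepts a final answer that passes a verifier. The "model" is a plain Python function here, an assumption that keeps the example runnable without an LLM; `compact`, `run_agent`, and `scripted_model` are made-up names.

```python
from __future__ import annotations

from typing import Callable, Optional


def compact(history: list[str], max_messages: int) -> list[str]:
    """Keep the task (first message) and the most recent turns; replace the middle with a marker.

    A real harness would summarize the dropped turns with an LLM; a placeholder is used here.
    Assumes max_messages >= 3.
    """
    if len(history) <= max_messages:
        return history
    keep_tail = max_messages - 2
    middle = history[1:len(history) - keep_tail]
    return [history[0], f"[compacted {len(middle)} earlier messages]"] + history[len(history) - keep_tail:]


def run_agent(
    task: str,
    model: Callable[[list[str]], tuple[str, str]],
    tools: dict[str, Callable[[str], str]],
    verify: Callable[[str], bool],
    max_steps: int = 10,
    max_messages: int = 6,
) -> Optional[str]:
    history = [f"task: {task}"]
    for _ in range(max_steps):
        kind, payload = model(history)          # reason: decide the next action
        if kind == "final":
            if verify(payload):                 # verification before accepting an answer
                return payload
            history.append(f"verifier rejected: {payload}")
        else:
            name, _, arg = payload.partition(" ")
            tool = tools.get(name)
            observation = tool(arg) if tool else f"unknown tool: {name}"
            history.append(f"{payload} -> {observation}")  # act, then record the observation
        history = compact(history, max_messages)  # keep long runs within the context limit
    return None  # step budget exhausted without a verified answer


# Usage with a scripted stand-in for the model (hypothetical "add" tool):
def scripted_model(history: list[str]) -> tuple[str, str]:
    last = history[-1]
    if " -> " in last:
        return ("final", last.split(" -> ", 1)[1])
    return ("tool", "add 2 3")


tools = {"add": lambda arg: str(sum(int(x) for x in arg.split()))}
print(run_agent("add two numbers", scripted_model, tools, verify=lambda a: a == "5"))  # 5
```

Returning `None` instead of an unverified answer is a deliberate choice in this sketch: for long-horizon work, an honest failure is easier for the surrounding harness to handle than a confident wrong result.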

LangChain has open-sourced a codebase called “Deep Agents” to address these needs. From a different angle, Mike Chambers from AWS argued that the “loop” was never the hard part; the “harness” is. He defines the harness in terms of session isolation, context management, memory persistence, sandboxed execution, and observability. Hyperscalers like AWS are building broad platforms such as Bedrock AgentCore, while startups focus on the individual hard parts, a strong parallel to how the industry built microservices and cloud-native applications.

Deep Dive: Context Engineering & Agentic Search

Context engineering is a major bottleneck. Chroma’s CEO, Jeff Huber, used a biological analogy: the prefrontal cortex (System 2) is slow, while the rest of the brain (System 1) is fast. Agents need to balance reading and writing context to prevent “context rot”, the decline in model performance as the context grows longer.

Huber made a great distinction: “harness engineering” provides the tools that let the agent do its own context engineering, whereas traditional context engineering is done by humans orchestrating from the outside. Chroma showcased “Context 1,” a model specialized entirely in fast, agentic search. He noted that balancing exploration and exploitation in agent search remains an unsolved problem. Huber also offered three predictions for the future of context engineering:

- It will be continuous (dynamically pushed and pulled).

- It needs to be fast.

- It requires continual learning.
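Since Huber described the explore/exploit balance as unsolved, the following is only a textbook illustration of the trade-off, not Chroma's approach: an epsilon-greedy bandit that chooses between search strategies. The strategy names (`grep`, `semantic`) and the reward signal (for example, 1.0 if a retrieved result was actually used, else 0.0) are assumptions made for illustration.

```python
import random


class EpsilonGreedySearch:
    """Epsilon-greedy bandit over hypothetical search strategies."""

    def __init__(self, arms, epsilon=0.1, seed=None):
        self.arms = list(arms)
        self.epsilon = epsilon                       # probability of exploring
        self.counts = {a: 0 for a in self.arms}      # pulls per strategy
        self.values = {a: 0.0 for a in self.arms}    # running mean reward per strategy
        self.rng = random.Random(seed)

    def select(self):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)                    # explore: try any strategy
        return max(self.arms, key=lambda a: self.values[a])     # exploit: best so far

    def update(self, arm, reward):
        # Incremental mean: avoids storing every past reward.
        self.counts[arm] += 1
        self.values[arm] += (reward - self.values[arm]) / self.counts[arm]


# Usage: pick a strategy, run the search, then report how useful the result was.
bandit = EpsilonGreedySearch(["grep", "semantic"], epsilon=0.2, seed=0)
strategy = bandit.select()
bandit.update(strategy, reward=1.0)
```

A fixed epsilon is the crudest possible policy; part of what makes the problem open for agents is that the reward arrives late and noisily, long after the search call itself.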

Brandon Waselnuk from Unblocked drove the context point home perfectly: “Your coding agent only sees your code, but your team’s architectural decisions live in Slack and Jira.” Unblocked treats every agent session as the agent’s “day one on the job” and builds its context engine on six pillars:

- Unifying system context.

- Conflict resolution.

- Targeted retrieval.

- Data governance.

- Token optimization.

- Personalization and relevance.
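Two of these pillars, targeted retrieval and token optimization, can be illustrated together. The sketch below is a hypothetical simplification, not Unblocked's engine: given retrieved chunks already scored for relevance, it greedily keeps the most relevant ones that still fit within a token budget. Counting tokens by splitting on whitespace is an assumption; a real system would use the model's tokenizer.

```python
def pack_context(chunks, budget_tokens):
    """Greedily keep the most relevant chunks that fit in the token budget.

    chunks: list of (relevance, text) pairs; higher relevance is better.
    Token cost is approximated as the number of whitespace-separated words.
    """
    selected, used = [], 0
    for relevance, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        cost = len(text.split())
        if used + cost <= budget_tokens:
            selected.append(text)
            used += cost
    return selected


chunks = [
    (0.9, "deploy uses blue green rollout"),
    (0.5, "lunch menu for friday"),
    (0.8, "integration tests run nightly"),
]
print(pack_context(chunks, budget_tokens=9))
# ['deploy uses blue green rollout', 'integration tests run nightly']
```

Greedy packing is not optimal in general (it is a knapsack problem), but it is cheap and keeps the highest-relevance context first, which is usually what matters.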

Their approach to conflict resolution is particularly interesting. When retrieved context is contradictory, they use a “social graph builder”: if a certain engineer is the integration test expert, that engineer’s documents on the topic should take precedence over other sources, much as a human teammate would intuit.
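The expertise-weighted precedence described above can be sketched as a simple ranking rule. This is an assumption-laden illustration, not Unblocked's implementation: the per-topic `expertise` scores stand in for whatever a social graph builder would derive (for example, from authorship and review history), and recency is used only as a tie-breaker. `Snippet` and `resolve_conflict` are hypothetical names.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Snippet:
    text: str
    author: str
    topic: str
    timestamp: int  # e.g. Unix seconds; used only to break ties


def resolve_conflict(snippets: list[Snippet], expertise: dict[str, dict[str, float]]) -> Snippet:
    """Among contradictory snippets, prefer the author with the most expertise on the topic."""
    def rank(s: Snippet) -> tuple[float, int]:
        # Unknown authors or topics score 0.0; newer snippets win ties.
        return (expertise.get(s.author, {}).get(s.topic, 0.0), s.timestamp)
    return max(snippets, key=rank)


expertise = {"alice": {"integration-tests": 0.9}, "bob": {"integration-tests": 0.2}}
docs = [
    Snippet("Run integration tests against staging.", "alice", "integration-tests", 1_700_000_000),
    Snippet("Run integration tests locally with mocks.", "bob", "integration-tests", 1_710_000_000),
]
print(resolve_conflict(docs, expertise).author)  # alice
```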

The AI Startup Landscape

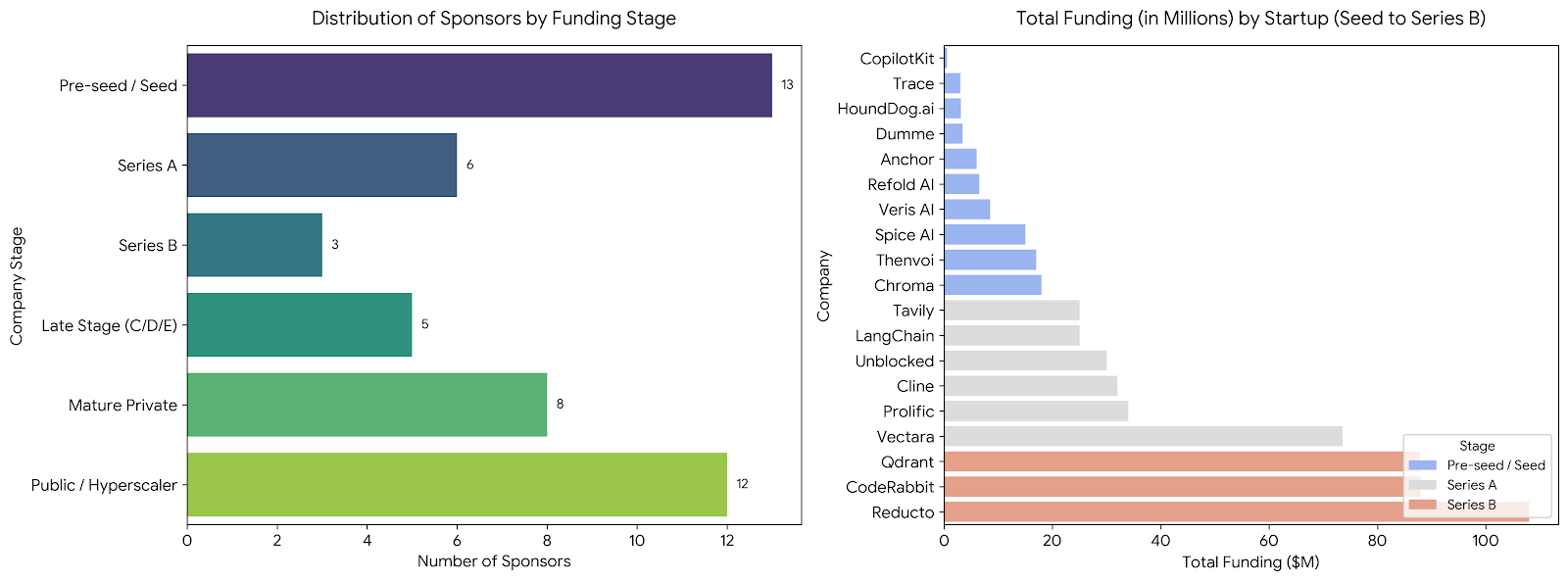

The 70 sponsors served as a great survey of the industry. My breakdown showed about 13 pre-seed and seed companies, 6 at Series A, 3 at Series B, 5 at later stages, and roughly 8 mature private companies. Funding is flowing heavily into document parsing, RAG/databases, agentic code review, and orchestration.

Three specific startups stood out to me:

- Band AI (Thenvoi): A fascinating approach, essentially building a “Slack for agents” to coordinate multi-agent systems.

- AI21: Their session, “End to Manual Tinkering,” introduced the Maestro framework. They argue that instead of manually tuning parameters, you can introduce a neural network to learn those settings, for large gains. Normally you can pick only two of latency, cost, and accuracy, but Maestro uses a two-stage process (offline action-model training, then runtime action selection at inference time) to balance all three dynamically via a heterogeneous ensemble.

- Veris.AI: They focus on simulation and sandboxes, but I have doubts about their scalability. Their demo was a simple medical triage agent using the Epic API. For complicated enterprise agents, I am skeptical they can achieve high-fidelity simulation from a data schema alone. Overall, though, this is a promising area.
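AI21's two-stage idea can be illustrated with a deliberately simplified sketch. This is not the Maestro API: the offline stage here just averages logged metrics per action (a lookup table standing in for the learned action model AI21 described), and the runtime stage picks the action with the highest predicted accuracy that fits the latency and cost budgets. The action names and numbers are made up for illustration.

```python
from collections import defaultdict
from statistics import mean


def train_action_model(logs):
    """Offline stage (stand-in): average observed (accuracy, latency_s, cost_usd) per action.

    logs: iterable of (action, accuracy, latency_s, cost_usd) tuples from past runs.
    """
    grouped = defaultdict(list)
    for action, accuracy, latency_s, cost_usd in logs:
        grouped[action].append((accuracy, latency_s, cost_usd))
    return {action: tuple(mean(col) for col in zip(*rows)) for action, rows in grouped.items()}


def select_action(model, max_latency_s, max_cost_usd):
    """Runtime stage: highest predicted accuracy among actions within both budgets."""
    feasible = [
        (acc, action)
        for action, (acc, lat, cost) in model.items()
        if lat <= max_latency_s and cost <= max_cost_usd
    ]
    return max(feasible)[1] if feasible else None


logs = [
    ("small-model", 0.70, 0.5, 0.001),
    ("small-model", 0.74, 0.7, 0.001),
    ("large-model", 0.90, 3.0, 0.020),
    ("ensemble", 0.93, 4.0, 0.030),
]
model = train_action_model(logs)
print(select_action(model, max_latency_s=5.0, max_cost_usd=0.05))  # ensemble
print(select_action(model, max_latency_s=1.0, max_cost_usd=0.05))  # small-model
```

Tightening the latency budget shifts the choice to a cheaper action, which is the "pick two" trade-off made explicit and adjustable per request.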